The single cause fallacy

Simple answers rarely address complex problems

The single cause fallacy (causal oversimplification) is the error of assuming that a complex phenomenon has only one cause when it almost certainly has many.

I’ve been thinking a lot about this fallacy given its pervasiveness in health misinformation. An entire cottage industry has been born from wellness influencers and alt-med businesses claiming vaccines cause nearly all health problems. Or metabolic syndrome. Or leaky gut.

The fallacy is seductive. Simple explanations feel satisfying and actionable: if X caused the problem, we know exactly what to fix. But most real-world events emerge from overlapping, interacting causes. Artificially reducing them to one distorts both understanding and response.

I saw the fallacy at work recently in a discussion in which the following claim was made: “Nobody wants AI.” I’ll address that sentiment in a moment. First, the fallacy itself is worth unpacking.

The term “single cause fallacy” was coined in the 20th century as informal logic developed, though the error it describes is as old as human reasoning itself.

The fallacy is rooted in ancient philosophical debates about causation. Aristotle’s framework of multiple causes (material, formal, efficient, final) was a response to earlier thinkers who explained natural phenomena through a single underlying principle. Centuries later, during the Enlightenment, Scottish philosopher David Hume laid out just how complex causal inference is.

The fallacy has caused enormous harm when it comes to our bodies. Early germ theory sometimes claimed disease was simply a matter of pathogen exposure, ignoring the roles of immune function, nutrition, stress, and social determinants of health. Terrain theory, currently being revived in extreme contrarian wellness circles, swings too far in the opposite direction, downplaying the risks of pathogen exposure.

The opioid crisis is a more recent example. Pharmaceutical companies (and some prescribers) pushed a single-cause model of under-treated pain while obscuring addiction risk. After collusion between pharma and providers was revealed, the diagnosis was reversed. A single-cause model emerged that only blamed pharma, overlooking regulatory failure, social isolation, and prescriber culture.

Then there’s Donald Trump blaming immigrants for all of America’s woes, a method proven to incite nationalism in authoritarian regimes.

Humans prefer simple answers to complex problems. It’s a cognitive survival strategy, as weighing every possible variable in every situation is exhausting.

Heuristics were inevitable given our brain’s energy requirements. Unfortunately, we’ve gone too far in the direction of simplicity, a trait that makes us easy marks for exploitation.

There are many rightful concerns about AI.

As a writer, I’m in the crosshairs of job extinction. At the very least, I need to consider the role of writing in the coming years and decades. I’m not downplaying the very real problems AI is forcing society to grapple with.

But what do we mean when using the term AI? We’re usually referring to chatbots and defense tech when discussing doom-and-gloom scenarios. Yet AI is far more than that. Some who claim to not want it don’t realize how integrated AI is into the fabric of society. A (very) few examples include:

Streaming and entertainment. The recommendation engines of Netflix, Spotify, and YouTube are built on machine learning models analyzing behavior.

Smartphone cameras. Smartphone photography is almost entirely AI-mediated.

Email and messaging. Spam filters, autocomplete suggestions, and autocorrect features across major consumer products run on AI.

Navigation. Google Maps and Waze rely on AI for most features.

Smart home devices. Alexa, Google Home, and Siri are driven by AI.

Health wearables. Apple Watch, Fitbit, and Garmin use AI to track health variables and provide updates.

To say no one wants AI collapses a complex and contextually variable set of realities into a single monolithic verdict. Sentiment reflects numerous overlapping factors, including attitudes toward productivity tools, fear of job displacement, distrust over data privacy, and enthusiasm among younger users.

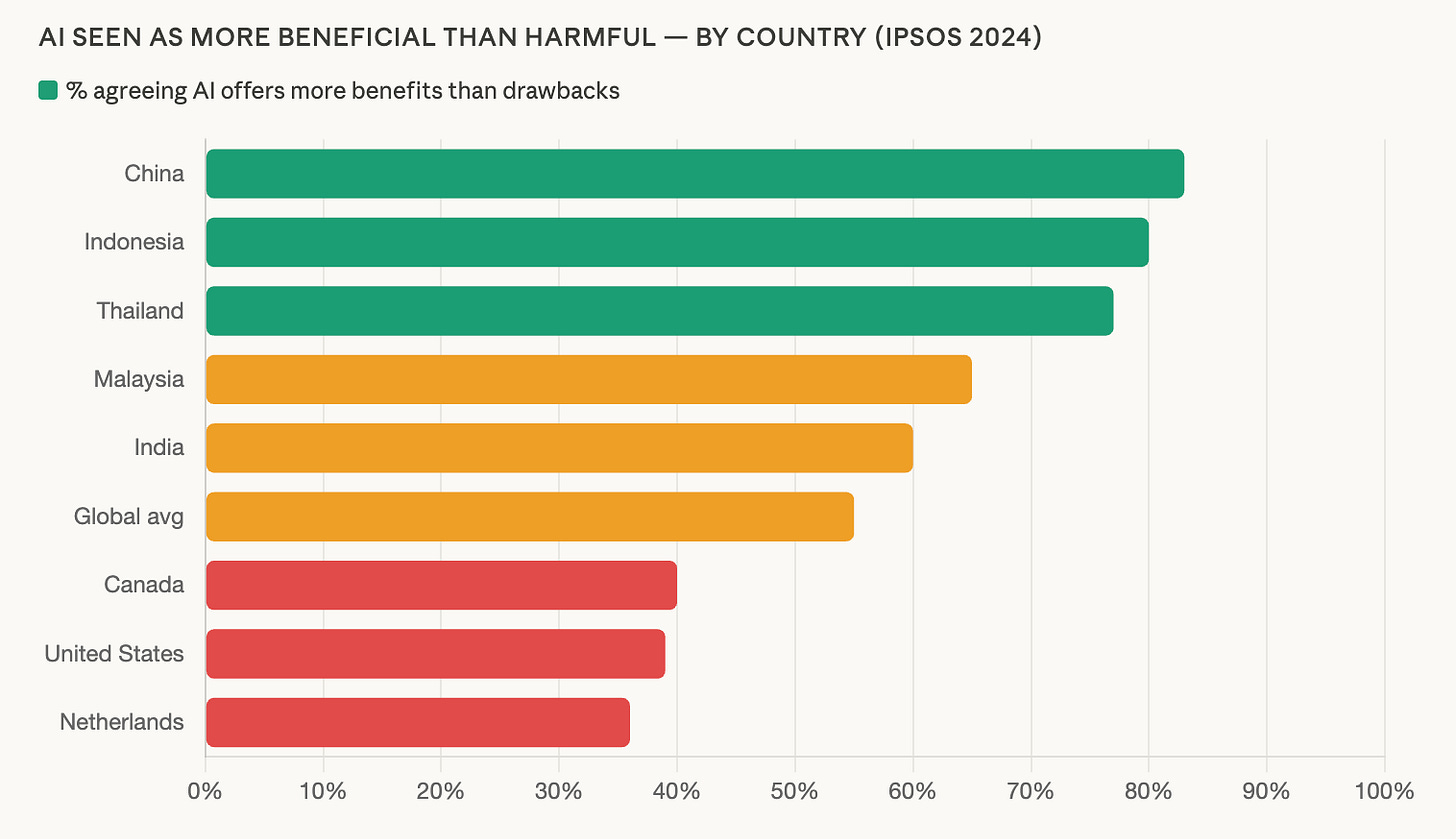

The claim also ignores contrary evidence. In fact, the global average tilts toward AI’s benefits:

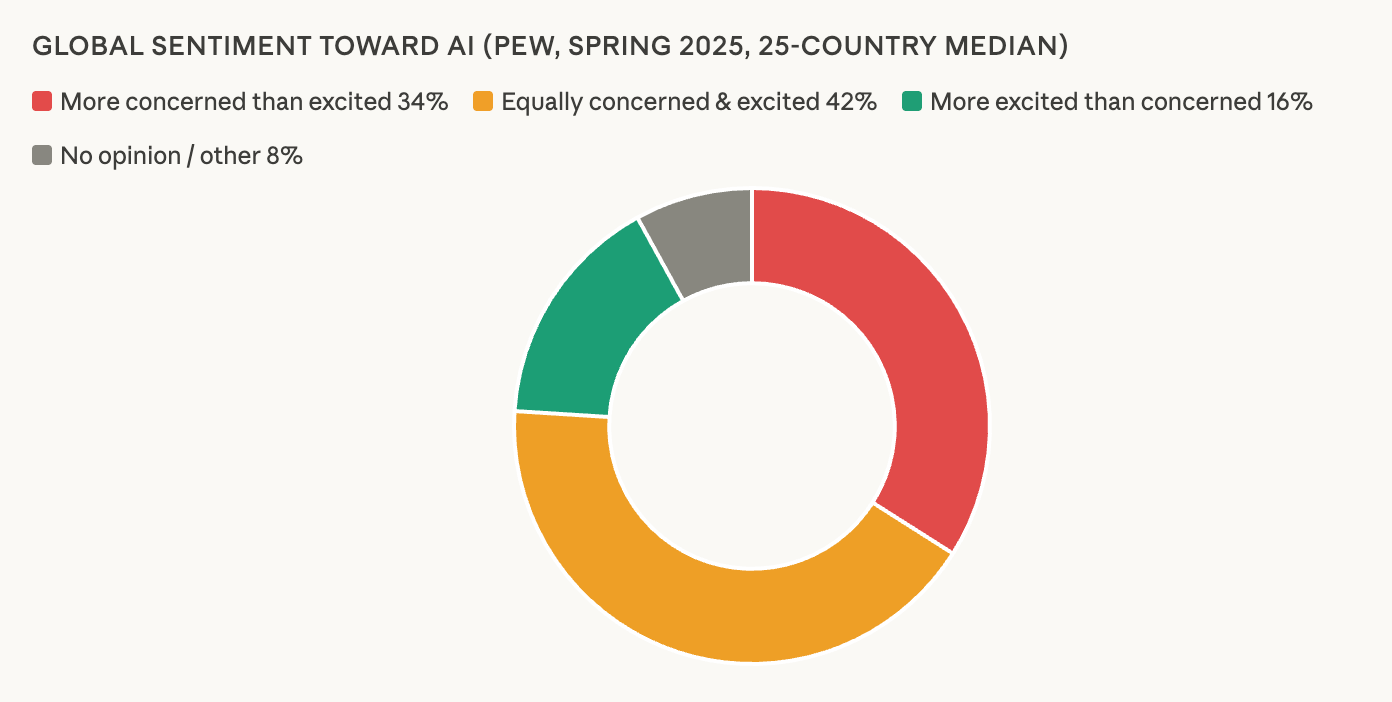

Global sentiment also skews toward concern, or a mix of concern and excitement:

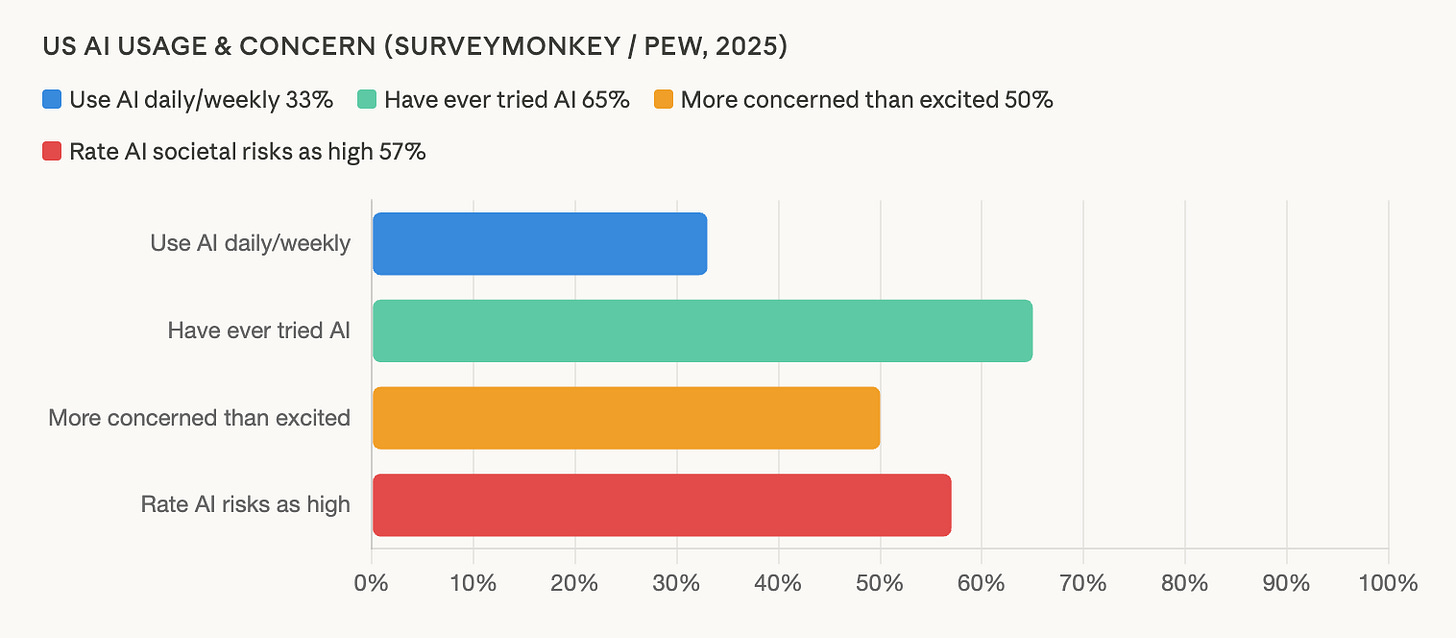

More than half of Americans (including me) think the risks are high.

A final note concerns familiarity. Research consistently finds people who understand AI better tend to view it more favorably. This suggests at least some negative sentiment is driven by unfamiliarity rather than informed rejection.

The top barriers to adoption are lack of need and lack of trust, both of which are understandable. Once you widen your view of how deeply AI is embedded in everyday products, the “need” part may shift. And if you’re relying on these products and still claiming the need isn’t there, that’s a contradiction no amount of information is going to resolve.

What to do about totalizing statements, whether in AI, health, or politics? A good practice is to ask “what else?” You don’t need to weigh every variable, but scratching the surface often reveals at least a few that are fighting for recognition.

To return to the body: a good diagnosis covers a lot of ground, citing multiple variables that could be at play. Then a detailed roadmap for fighting the problem can be drawn.

Reducing complexity to one variable will likely create blind spots, reducing the possibility of successful recovery. That’s too bad given the information was right there all along.

Thank you, Derek. This is a great article and an important exploration. I've been thinking of this a lot lately too, in a related way - how often the singular solution is based in the individual, when often our situations, whatever they may be, are a rich complexity of personal agency and social, economic, and environmental influences. I know you know all about this. I just wonder how we can effectively support people compassionately when capitalism pushes hard on the single solution/everything-is-personal-responsibility model.